Preface

TensorRT-LLM has been officially out for about half a month. I hadn't found time to play with it, so I took advantage of a free weekend to get it running.

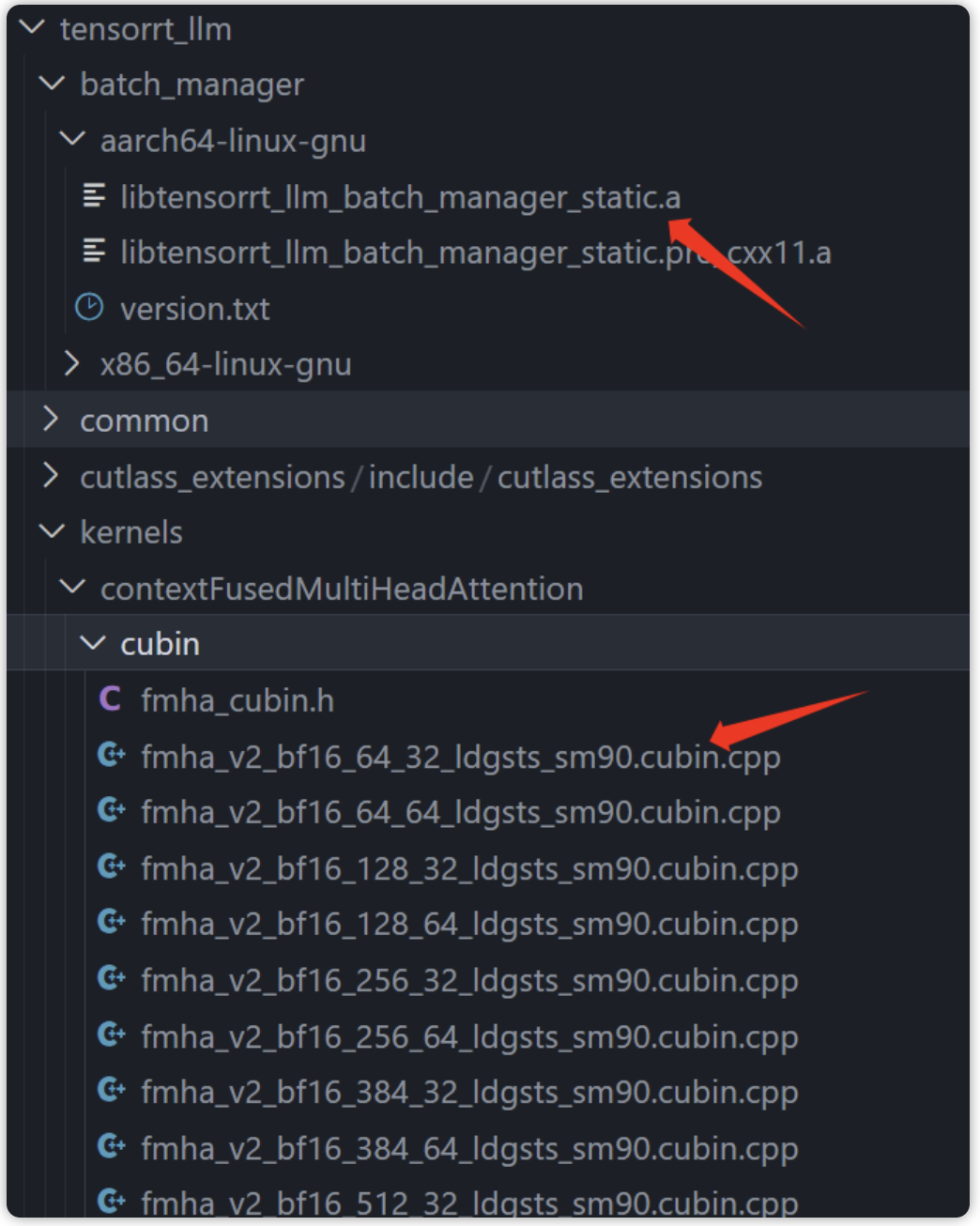

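When I tried the early-access version it already required CUDA 12.x, and the official release still does. The main reason is that the CUBINs (precompiled binary kernels) that TensorRT-LLM depends on were compiled with CUDA 12.x, so the only way to run it is to update the driver.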

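So if you just want to get TensorRT-LLM running quickly, I recommend upgrading nvidia-driver to 535.xxx and running everything in Docker, which saves you from wrestling with the environment yourself. If you want to customize the source code, you can do that inside the container too.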

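In theory, you could use another CUDA version by replacing this part of the original code. The batch manager is merely closed-source and shouldn't be tied to a particular CUDA version; the main obstacle is the FMHA (fused multi-head attention) module. Also, the TensorRT that TensorRT-LLM depends on has CUDA 11.x builds, and the triton-inference-server that runs inflight_batcher_llm has no hard dependency on CUDA 12.x either: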

(Figure: the precompiled parts of TensorRT-LLM)

With the environment requirements covered, let's set up the environment!

Setting up the runtime environment and libraries

First, pull the image. The host's GPU driver must be version 535 or later:

docker pull nvcr.io/nvidia/tritonserver:23.10-trtllm-python-py3

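This image was released just a few days ago and contains everything needed to run TensorRT-LLM (TensorRT, MPI, nvcc, NCCL and so on), sparing you the trouble of configuring the environment yourself.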
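Since the image only works with a host driver of 535 or newer, it can be worth checking the host before pulling several GB of image. A minimal sketch, run on the host (not in the container), assuming `nvidia-smi` is on the PATH: it queries `nvidia-smi --query-gpu=driver_version --format=csv,noheader` and compares the major version with 535. The helper names are hypothetical.

```python
import re
import subprocess

MIN_DRIVER_MAJOR = 535  # minimum host driver version required by the image


def parse_driver_major(version: str) -> int:
    """Return the major number of a driver string such as '535.113.01'."""
    match = re.match(r"\s*(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized driver version: {version!r}")
    return int(match.group(1))


def driver_is_new_enough(version: str, minimum: int = MIN_DRIVER_MAJOR) -> bool:
    return parse_driver_major(version) >= minimum


def query_host_driver() -> list[str]:
    """Ask nvidia-smi for the driver version reported for each visible GPU."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=driver_version", "--format=csv,noheader"],
        check=True, capture_output=True, text=True,
    ).stdout
    return [line.strip() for line in out.splitlines() if line.strip()]
```

For example, `all(driver_is_new_enough(v) for v in query_host_driver())` should be True before you go on.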

Once the image is pulled, start a container from it:

docker run -it -d --cap-add=SYS_PTRACE --cap-add=SYS_ADMIN --security-opt seccomp=unconfined --gpus=all --shm-size=16g --privileged --ulimit memlock=-1 --name=develop nvcr.io/nvidia/tritonserver:23.10-trtllm-python-py3 bash

All subsequent steps are done inside this container.

Building TensorRT-LLM

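First, clone the git repository. The image only contains the libraries needed at runtime; the model still has to be built yourself (it runs on TensorRT, and anyone who has used TRT knows you have to build an engine), so let's start by building TensorRT-LLM: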

# TensorRT-LLM uses git-lfs, which needs to be installed in advance.

apt-get update && apt-get -y install git git-lfs

git clone https://github.com/NVIDIA/TensorRT-LLM.git

cd TensorRT-LLM

git submodule update --init --recursive

git lfs install

git lfs pull

Then build it from inside the repository:

python3 ./scripts/build_wheel.py --trt_root /usr/local/tensorrt

You usually won't hit environment problems, since this Docker image already contains every required package. build_wheel pip-installs a few more packages as defined in the script, then runs cmake and make to compile everything:

..

adding 'tensorrt_llm/tools/plugin_gen/templates/functional.py.tpl'

adding 'tensorrt_llm/tools/plugin_gen/templates/plugin.cpp.tpl'

adding 'tensorrt_llm/tools/plugin_gen/templates/plugin.h.tpl'

adding 'tensorrt_llm/tools/plugin_gen/templates/plugin_common.cpp'

adding 'tensorrt_llm/tools/plugin_gen/templates/plugin_common.h'

adding 'tensorrt_llm/tools/plugin_gen/templates/tritonPlugins.cpp.tpl'

adding 'tensorrt_llm-0.5.0.dist-info/LICENSE'

adding 'tensorrt_llm-0.5.0.dist-info/METADATA'

adding 'tensorrt_llm-0.5.0.dist-info/WHEEL'

adding 'tensorrt_llm-0.5.0.dist-info/top_level.txt'

adding 'tensorrt_llm-0.5.0.dist-info/zip-safe'

adding 'tensorrt_llm-0.5.0.dist-info/RECORD'

removing build/bdist.linux-x86_64/wheel

Successfully built tensorrt_llm-0.5.0-py3-none-any.whl

Then just run pip install tensorrt_llm-0.5.0-py3-none-any.whl.
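If you script this step, a small Python helper can locate the freshly built wheel and install it with the current interpreter's pip. This is a sketch: the directory you pass in should be wherever build_wheel.py wrote the wheel (check the end of your build log), and `find_wheel`, `wheel_version` and `install_wheel` are hypothetical helper names.

```python
import subprocess
import sys
from pathlib import Path


def find_wheel(dist_dir: str, package: str = "tensorrt_llm") -> Path:
    """Return the last (in lexical order) wheel for `package` found in dist_dir."""
    wheels = sorted(Path(dist_dir).glob(f"{package}-*.whl"))
    if not wheels:
        raise FileNotFoundError(f"no {package} wheel found in {dist_dir}")
    return wheels[-1]


def wheel_version(wheel: Path) -> str:
    # Wheel names follow {name}-{version}-{python tag}-{abi tag}-{platform tag}.whl
    return wheel.name.split("-")[1]


def install_wheel(wheel: Path) -> None:
    # Use this interpreter's pip so the package lands in the active environment.
    subprocess.run([sys.executable, "-m", "pip", "install", str(wheel)], check=True)
```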

Running

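First, build the model. Since I haven't downloaded any new models lately, I'll use the old LLaMA as the example. Honestly, other LLMs (ChatGLM, Qwen, etc.) work the same way: as long as TRT-LLM supports them, the build and run steps are identical. Just download the model you want to test from Hugging Face.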

Here I ran:

# --model_dir can be replaced with your own LLM; --use_inflight_batching enables inflight batching
python /work/code/TensorRT-LLM/examples/llama/build.py \
    --model_dir /work/models/GPT/LLAMA/llama-7b-hf \
    --dtype float16 \
    --remove_input_padding \
    --use_gpt_attention_plugin float16 \
    --enable_context_fmha \
    --use_gemm_plugin float16 \
    --use_inflight_batching \
    --output_dir /work/trtModel/llama/1-gpu

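What follows is the TensorRT compilation and engine build. With the plugins enabled this is quite fast. I only used a single A4000, so I didn't set world_size, which defaults to 1. There are many details in this step that I'll cover in a later post.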
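To give one of those details some intuition: `--remove_input_padding` changes how a batch of prompts is laid out. Instead of padding every prompt to the longest one, the tokens of all sequences are packed back to back and the kernels track each sequence's length. The sketch below is a conceptual illustration in plain Python, not TensorRT-LLM's API; the token IDs are made up.

```python
from itertools import accumulate


def pad_batch(seqs, pad_id=0):
    """Classic padded layout: every row is stretched to the longest sequence."""
    max_len = max(len(s) for s in seqs)
    return [s + [pad_id] * (max_len - len(s)) for s in seqs]


def pack_batch(seqs):
    """Packed layout: all tokens concatenated, plus per-sequence lengths and offsets."""
    flat = [tok for s in seqs for tok in s]
    lengths = [len(s) for s in seqs]
    offsets = [0, *accumulate(lengths)]  # sequence i is flat[offsets[i]:offsets[i + 1]]
    return flat, lengths, offsets


batch = [[101, 7, 8, 9], [101, 5], [101, 3, 4]]
padded = pad_batch(batch)
flat, lengths, offsets = pack_batch(batch)
print(sum(len(row) for row in padded), "slots padded vs", len(flat), "slots packed")
```

This prints `12 slots padded vs 9 slots packed`; with prompts of very different lengths, the padded layout wastes compute on pad tokens that the packed layout never processes.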

Once the engine is built, the directory /work/trtModel/llama/1-gpu is created; we'll need it in the next step.

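Next, inside a clone of the tensorrtllm_backend repository (https://github.com/triton-inference-server/tensorrtllm_backend; mine is at /work/code/tensorrtllm_backend), run the following commands: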

cd tensorrtllm_backend

mkdir triton_model_repo

# Copy the template model folders

cp -r all_models/inflight_batcher_llm/* triton_model_repo/

# Copy the freshly generated `/work/trtModel/llama/1-gpu` into the template's tensorrt_llm model folder

cp /work/trtModel/llama/1-gpu/* triton_model_repo/tensorrt_llm/1

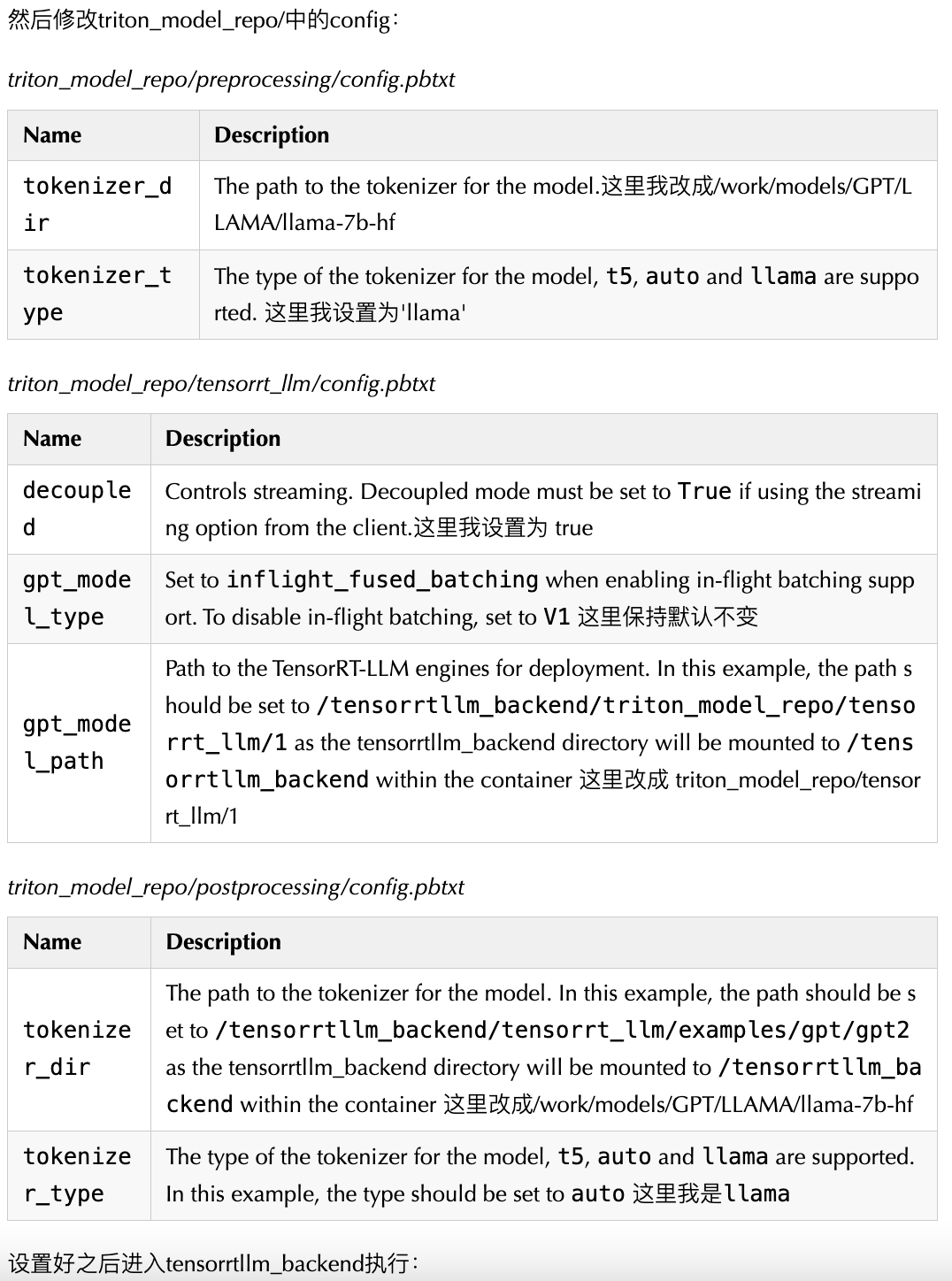
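The copy steps above can also be done in Python, which makes it easy to add a sanity check that all four sub-models of the inflight_batcher_llm template (ensemble, preprocessing, postprocessing and tensorrt_llm, the same names that appear in the server log) ended up in the repository. A sketch assuming the template layout used above; `prepare_model_repo` and `missing_models` are hypothetical helpers, and they do not edit any config.pbtxt for you.

```python
import shutil
from pathlib import Path

# Sub-models of the inflight_batcher_llm template.
EXPECTED_MODELS = ("ensemble", "preprocessing", "postprocessing", "tensorrt_llm")


def prepare_model_repo(template_dir: str, engine_dir: str, repo_dir: str) -> Path:
    """Copy the template models, then copy the engine files into tensorrt_llm/1."""
    repo = Path(repo_dir)
    shutil.copytree(template_dir, repo, dirs_exist_ok=True)
    version_dir = repo / "tensorrt_llm" / "1"
    version_dir.mkdir(parents=True, exist_ok=True)
    for item in Path(engine_dir).iterdir():
        if item.is_file():  # like `cp dir/*`: plain files only, no subdirectories
            shutil.copy2(item, version_dir / item.name)
    return repo


def missing_models(repo_dir: str) -> list[str]:
    """List expected sub-models lacking a config.pbtxt (an empty list means OK)."""
    repo = Path(repo_dir)
    return [m for m in EXPECTED_MODELS if not (repo / m / "config.pbtxt").is_file()]
```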

Once everything is set up, run this from inside tensorrtllm_backend:

python3 scripts/launch_triton_server.py --world_size=1 --model_repo=triton_model_repo

If all goes well, you'll see output like this:

root@6aaab84e59c0:/work/code/tensorrtllm_backend# I1105 14:16:58.286836 2561098 pinned_memory_manager.cc:241] Pinned memory pool is created at '0x7ffb76000000' with size 268435456

I1105 14:16:58.286973 2561098 cuda_memory_manager.cc:107] CUDA memory pool is created on device 0 with size 67108864

I1105 14:16:58.288120 2561098 model_lifecycle.cc:461] loading: tensorrt_llm:1

I1105 14:16:58.288135 2561098 model_lifecycle.cc:461] loading: preprocessing:1

I1105 14:16:58.288142 2561098 model_lifecycle.cc:461] loading: postprocessing:1

[TensorRT-LLM][WARNING] max_tokens_in_paged_kv_cache is not specified, will use default value

[TensorRT-LLM][WARNING] batch_scheduler_policy parameter was not found or is invalid (must be max_utilization or guaranteed_no_evict)

[TensorRT-LLM][WARNING] kv_cache_free_gpu_mem_fraction is not specified, will use default value of 0.85 or max_tokens_in_paged_kv_cache

[TensorRT-LLM][WARNING] max_num_sequences is not specified, will be set to the TRT engine max_batch_size

[TensorRT-LLM][WARNING] enable_trt_overlap is not specified, will be set to true

[TensorRT-LLM][WARNING] [json.exception.type_error.302] type must be number, but is null

[TensorRT-LLM][WARNING] Optional value for parameter max_num_tokens will not be set.

[TensorRT-LLM][INFO] Initializing MPI with thread mode 1

I1105 14:16:58.392915 2561098 python_be.cc:2199] TRITONBACKEND_ModelInstanceInitialize: postprocessing_0_0 (CPU device 0)

I1105 14:16:58.392979 2561098 python_be.cc:2199] TRITONBACKEND_ModelInstanceInitialize: preprocessing_0_0 (CPU device 0)

[TensorRT-LLM][INFO] MPI size: 1, rank: 0

I1105 14:16:58.732165 2561098 model_lifecycle.cc:818] successfully loaded 'postprocessing'

I1105 14:16:59.383255 2561098 model_lifecycle.cc:818] successfully loaded 'preprocessing'

[TensorRT-LLM][INFO] TRTGptModel maxNumSequences: 16

[TensorRT-LLM][INFO] TRTGptModel maxBatchSize: 8

[TensorRT-LLM][INFO] TRTGptModel enableTrtOverlap: 1

[TensorRT-LLM][INFO] Loaded engine size: 12856 MiB

[TensorRT-LLM][INFO] [MemUsageChange] Init cuBLAS/cuBLASLt: CPU +0, GPU +8, now: CPU 13144, GPU 13111 (MiB)

[TensorRT-LLM][INFO] [MemUsageChange] Init cuDNN: CPU +2, GPU +10, now: CPU 13146, GPU 13121 (MiB)

[TensorRT-LLM][INFO] [MemUsageChange] TensorRT-managed allocation in engine deserialization: CPU +0, GPU +12852, now: CPU 0, GPU 12852 (MiB)

[TensorRT-LLM][INFO] [MemUsageChange] Init cuBLAS/cuBLASLt: CPU +0, GPU +8, now: CPU 13164, GPU 14363 (MiB)

[TensorRT-LLM][INFO] [MemUsageChange] Init cuDNN: CPU +0, GPU +8, now: CPU 13164, GPU 14371 (MiB)

[TensorRT-LLM][INFO] [MemUsageChange] TensorRT-managed allocation in IExecutionContext creation: CPU +0, GPU +0, now: CPU 0, GPU 12852 (MiB)

[TensorRT-LLM][INFO] [MemUsageChange] Init cuBLAS/cuBLASLt: CPU +0, GPU +8, now: CPU 13198, GPU 14391 (MiB)

[TensorRT-LLM][INFO] [MemUsageChange] Init cuDNN: CPU +0, GPU +10, now: CPU 13198, GPU 14401 (MiB)

[TensorRT-LLM][INFO] [MemUsageChange] TensorRT-managed allocation in IExecutionContext creation: CPU +0, GPU +0, now: CPU 0, GPU 12852 (MiB)

[TensorRT-LLM][INFO] Using 2878 tokens in paged KV cache.

I1105 14:17:17.299293 2561098 model_lifecycle.cc:818] successfully loaded 'tensorrt_llm'

I1105 14:17:17.303661 2561098 model_lifecycle.cc:461] loading: ensemble:1

I1105 14:17:17.305897 2561098 model_lifecycle.cc:818] successfully loaded 'ensemble'

I1105 14:17:17.306051 2561098 server.cc:592]

+------------------+------+

| Repository Agent | Path |

+------------------+------+

+------------------+------+

I1105 14:17:17.306401 2561098 server.cc:619]

+-------------+-----------------------------------------------------------------+------------------------------------------------------------------------------------------------------+

| Backend | Path | Config |

+-------------+-----------------------------------------------------------------+------------------------------------------------------------------------------------------------------+

| tensorrtllm | /opt/tritonserver/backends/tensorrtllm/libtriton_tensorrtllm.so | {"cmdline":{"auto-complete-config":"false","backend-directory":"/opt/tritonserver/backends","min-com |

| | | pute-capability":"6.000000","default-max-batch-size":"4"}} |

| python | /opt/tritonserver/backends/python/libtriton_python.so | {"cmdline":{"auto-complete-config":"false","backend-directory":"/opt/tritonserver/backends","min-com |

| | | pute-capability":"6.000000","shm-region-prefix-name":"prefix0_","default-max-batch-size":"4"}} |

+-------------+-----------------------------------------------------------------+------------------------------------------------------------------------------------------------------+

I1105 14:17:17.307053 2561098 server.cc:662]

+----------------+---------+--------+

| Model | Version | Status |

+----------------+---------+--------+

| ensemble | 1 | READY |

| postprocessing | 1 | READY |

| preprocessing | 1 | READY |

| tensorrt_llm | 1 | READY |

+----------------+---------+--------+

I1105 14:17:17.393318 2561098 metrics.cc:817] Collecting metrics for GPU 0: NVIDIA RTX A4000

I1105 14:17:17.393534 2561098 metrics.cc:710] Collecting CPU metrics

I1105 14:17:17.394550 2561098 tritonserver.cc:2458]

+----------------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------+

| Option | Value |

+----------------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------+

| server_id | triton |

| server_version | 2.39.0 |

| server_extensions | classification sequence model_repository model_repository(unload_dependents) schedule_policy model_configuration system_shared_memory cuda_shared_ |

| | memory binary_tensor_data parameters statistics trace logging |

| model_repository_path[0] | /work/triton_models/inflight_batcher_llm |

| model_control_mode | MODE_NONE |

| strict_model_config | 1 |

| rate_limit | OFF |

| pinned_memory_pool_byte_size | 268435456 |

| cuda_memory_pool_byte_size{0} | 67108864 |

| min_supported_compute_capability | 6.0 |

| strict_readiness | 1 |

| exit_timeout | 30 |

| cache_enabled | 0 |

+----------------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------+

I1105 14:17:17.423479 2561098 grpc_server.cc:2513] Started GRPCInferenceService at 0.0.0.0:8001

I1105 14:17:17.424418 2561098 http_server.cc:4497] Started HTTPService at 0.0.0.0:8000

At this point triton-inference-server is up and running, with TensorRT-LLM as its backend.
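Rather than watching the log, a script can wait for the server by polling Triton's HTTP readiness endpoint, GET /v2/health/ready on port 8000 (part of the KServe v2 protocol that Triton implements). It returns 200 once the server is ready to serve; with strict_readiness set to 1, as in the options table above, that includes every model being loaded. A minimal stdlib-only sketch; `wait_until_ready` is a hypothetical name and the timeouts are arbitrary.

```python
import http.client
import time
import urllib.request


def wait_until_ready(base_url: str = "http://localhost:8000",
                     timeout_s: float = 300.0, poll_s: float = 2.0) -> bool:
    """Poll /v2/health/ready until it answers 200 or the deadline passes."""
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with urllib.request.urlopen(f"{base_url}/v2/health/ready",
                                        timeout=poll_s) as resp:
                if resp.status == 200:
                    return True
        except (OSError, http.client.HTTPException):
            # Connection refused, non-200 (HTTPError) or timeout: not ready yet.
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_s)
```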

nvidia-smi shows how much GPU memory the LLaMA-7B FP16 version uses:

+---------------------------------------------------------------------------------------+

Sun Nov 5 14:20:46 2023

+---------------------------------------------------------------------------------------+

| NVIDIA-SMI 535.113.01 Driver Version: 535.113.01 CUDA Version: 12.2 |

|-----------------------------------------+----------------------+----------------------+

| GPU Name Persistence-M | Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap | Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|=========================================+======================+======================|

| 0 NVIDIA RTX A4000 Off | 00000000:01:00.0 Off | Off |

| 41% 34C P8 16W / 140W | 15855MiB / 16376MiB | 0% Default |

| | | N/A |

+-----------------------------------------+----------------------+----------------------+

+---------------------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=======================================================================================|

+---------------------------------------------------------------------------------------+
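Where that memory goes can be roughly reconstructed from the server log. A back-of-the-envelope sketch, assuming the standard LLaMA-7B configuration (vocabulary 32000, hidden size 4096, FFN size 11008, 32 layers, 32 heads, untied input and output embeddings) and an FP16 KV cache. These hyperparameters are assumptions, not values read from the engine.

```python
MIB = 1024 ** 2

# Assumed LLaMA-7B hyperparameters (standard published config).
VOCAB, HIDDEN, INTERMEDIATE, LAYERS, HEADS = 32000, 4096, 11008, 32, 32
HEAD_DIM = HIDDEN // HEADS
FP16_BYTES = 2


def llama_param_count() -> int:
    embed = VOCAB * HIDDEN           # input embedding table
    lm_head = VOCAB * HIDDEN         # output projection (not tied to the embedding)
    attn = 4 * HIDDEN * HIDDEN       # q, k, v and o projections
    mlp = 3 * HIDDEN * INTERMEDIATE  # gate, up and down projections
    norms = 2 * HIDDEN               # two RMSNorm weight vectors per layer
    return embed + lm_head + LAYERS * (attn + mlp + norms) + HIDDEN  # + final norm


def kv_bytes_per_token() -> int:
    # One K and one V vector per layer and per head, stored in FP16.
    return 2 * LAYERS * HEADS * HEAD_DIM * FP16_BYTES


weights_mib = llama_param_count() * FP16_BYTES / MIB
kv_mib = 2878 * kv_bytes_per_token() / MIB  # 2878 tokens, as reported in the log
print(f"weights ~ {weights_mib:.1f} MiB, KV cache ~ {kv_mib:.1f} MiB")
```

This gives about 12852.5 MiB of FP16 weights, in line with the 12852 MiB TensorRT allocates while deserializing the engine, plus 0.5 MiB per cached token, about 1439 MiB for the 2878-token paged KV cache. The cuBLAS/cuDNN workspaces, the CUDA context and activation buffers plausibly make up most of the remainder of the 15855 MiB shown above.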

Client

Now let's send a request, starting with the HTTP interface:

# Run

curl -X POST localhost:8000/v2/models/ensemble/generate -d '{"text_input": "What is machine learning?", "max_tokens": 20, "bad_words": "", "stop_words": ""}'

# Response

{"model_name":"ensemble","model_version":"1","sequence_end":false,"sequence_id":0,"sequence_start":false,"text_output":" ? What is machine learning? Machine learning is a subfield of computer science that focuses on the development of algorithms that can learn"}
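The same request from Python, using only the standard library: a sketch that posts the fields from the curl example to the ensemble model's generate endpoint and returns text_output. The function names are hypothetical; the URL and JSON fields are exactly those of the curl request above.

```python
import json
import urllib.request

GENERATE_URL = "http://localhost:8000/v2/models/ensemble/generate"


def build_generate_payload(prompt: str, max_tokens: int = 20) -> bytes:
    # Same fields as the curl example; empty strings mean no bad/stop words.
    body = {"text_input": prompt, "max_tokens": max_tokens,
            "bad_words": "", "stop_words": ""}
    return json.dumps(body).encode("utf-8")


def parse_generate_response(raw: bytes) -> str:
    return json.loads(raw)["text_output"]


def generate(prompt: str, max_tokens: int = 20, url: str = GENERATE_URL) -> str:
    req = urllib.request.Request(
        url, data=build_generate_payload(prompt, max_tokens),
        headers={"Content-Type": "application/json"}, method="POST",
    )
    with urllib.request.urlopen(req, timeout=60) as resp:
        return parse_generate_response(resp.read())
```

With the server running, `generate("What is machine learning?")` should return the same kind of text_output as the curl call.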

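Triton doesn't currently support SSE, so if you want streaming you can use the gRPC protocol; the official repo also provides a gRPC client. First install the Triton client: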

pip install tritonclient[all]

Then run:

python3 inflight_batcher_llm/client/inflight_batcher_llm_client.py --request-output-len 200 --tokenizer_dir /work/models/GPT/LLAMA/llama-7b-hf --tokenizer_type llama --streaming

After sending the request you can see the tokens come back one at a time, the same word-by-word output you see when using ChatGPT-3.5:

...

[29953]

[29941]

[511]

[450]

[315]

[4664]

[457]

[310]

output_ids = [[0, 19298, 297, 6641, 29899, 23027, 3444, 29892, 1105, 7598, 16370, 408, 263, 14547, 297, 3681, 1434, 8401, 304, 4517, 297, 29871, 29896, 29947, 29946, 29955, 29889, 940, 3796, 472, 278, 23933, 5977, 322, 278, 7021, 16923, 297, 29258, 265, 1434, 8718, 670, 1914, 27144, 297, 29871, 29896, 29947, 29945, 29896, 29889, 940, 471, 263, 29323, 261, 310, 278, 671, 310, 21837, 7984, 292, 322, 471, 278, 937, 304, 671, 263, 10489, 380, 994, 29889, 940, 471, 884, 263, 410, 29880, 928, 9227, 322, 670, 8277, 5134, 450, 315, 4664, 457, 310, 3444, 313, 29896, 29947, 29945, 29896, 511, 450, 315, 4664, 457, 310, 12730, 313, 29896, 29947, 29945, 29946, 511, 450, 315, 4664, 457, 310, 13616, 313, 29896, 29947, 29945, 29945, 511, 450, 315, 4664, 457, 310, 9556, 313, 29896, 29947, 29945, 29955, 511, 450, 315, 4664, 457, 310, 17362, 313, 29896, 29947, 29945, 29947, 511, 450, 315, 4664, 457, 310, 12710, 313, 29896, 29947, 29945, 29929, 511, 450, 315, 4664, 457, 310, 14198, 653, 313, 29896, 29947, 29953, 29900, 511, 450, 315, 4664, 457, 310, 28806, 313, 29896, 29947, 29953, 29896, 511, 450, 315, 4664, 457, 310, 27440, 313, 29896, 29947, 29953, 29906, 511, 450, 315, 4664, 457, 310, 24506, 313, 29896, 29947, 29953, 29941, 511, 450, 315, 4664, 457, 310]]

Input: Born in north-east France, Soyer trained as a

Output: chef in Paris before moving to London in 1 847. He worked at the Reform Club and the Royal Hotel in Brighton before opening his own restaurant in 1 851 . He was a pioneer of the use of steam cooking and was the first to use a gas stove. He was also a prolific writer and his books included The Cuisine of France (1 851 ), The Cuisine of Italy (1 854), The Cuisine of Spain (1 855), The Cuisine of Germany (1 857), The Cuisine of Austria (1 858), The Cuisine of Russia (1 859), The Cuisine of Hungary (1 860), The Cuisine of Switzerland (1 861 ), The Cuisine of Norway (1 862), The Cuisine of Sweden (1863), The Cuisine of

Because inflight batching is enabled, several requests can actually be sent at the same time; just give each one a different request_id:

# user 1

python3 inflight_batcher_llm/client/inflight_batcher_llm_client.py --request-output-len 200 --tokenizer_dir /work/models/GPT/LLAMA/llama-7b-hf --tokenizer_type llama --streaming --request_id 1

# user 2

python3 inflight_batcher_llm/client/inflight_batcher_llm_client.py --request-output-len 200 --tokenizer_dir /work/models/GPT/LLAMA/llama-7b-hf --tokenizer_type llama --streaming --request_id 2
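Why this helps can be seen with a toy model of scheduling. It counts decoding steps only (one token per active request per step) and ignores prefill cost and KV-cache limits, so it is an illustration of the idea, not how TensorRT-LLM's batch manager is implemented. With static batching a batch runs until its longest request finishes; with inflight batching, a finished request's slot is refilled from the queue at the next step.

```python
from collections import deque


def static_batching_steps(output_lens, max_batch):
    """Requests are grouped in arrival order; each group runs until its longest finishes."""
    steps = 0
    for i in range(0, len(output_lens), max_batch):
        steps += max(output_lens[i:i + max_batch])
    return steps


def inflight_batching_steps(output_lens, max_batch):
    """Every step, finished requests leave and queued requests take their slots."""
    queue = deque(output_lens)
    active = []  # tokens still to generate for each request in the running batch
    steps = 0
    while queue or active:
        while queue and len(active) < max_batch:
            active.append(queue.popleft())  # admit queued requests into free slots
        steps += 1  # one forward pass produces one token for every active request
        active = [r - 1 for r in active if r > 1]  # requests with 1 token left finish now
    return steps


lens = [100, 10, 10, 10, 10]  # one long request and four short ones
print(static_batching_steps(lens, 2), inflight_batching_steps(lens, 2))
```

This prints `120 100`: under inflight batching the four short requests run one after another in the second slot while the long request is still decoding, instead of waiting for it to finish.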

That completes a quick run through the whole TensorRT-LLM workflow.

Usage suggestions

I strongly recommend using Docker. Life is short.

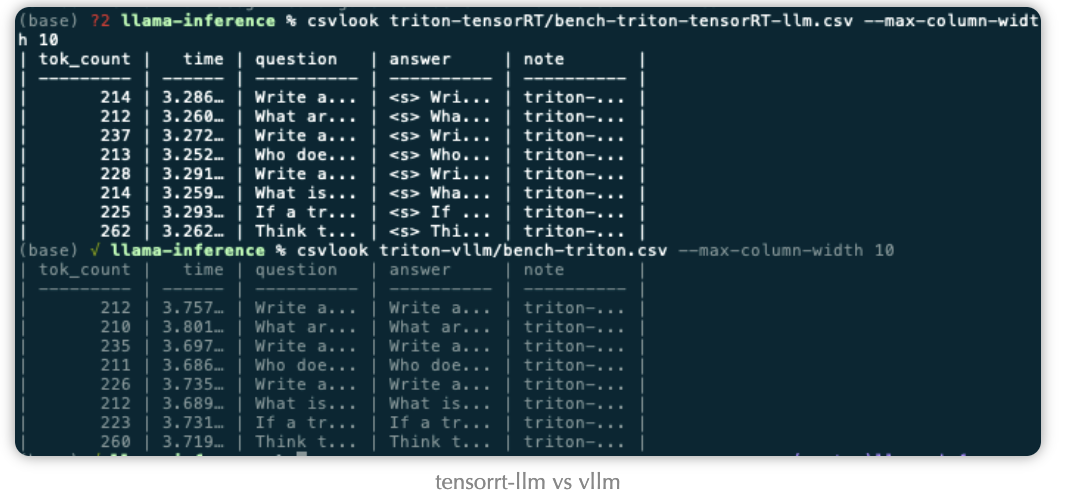

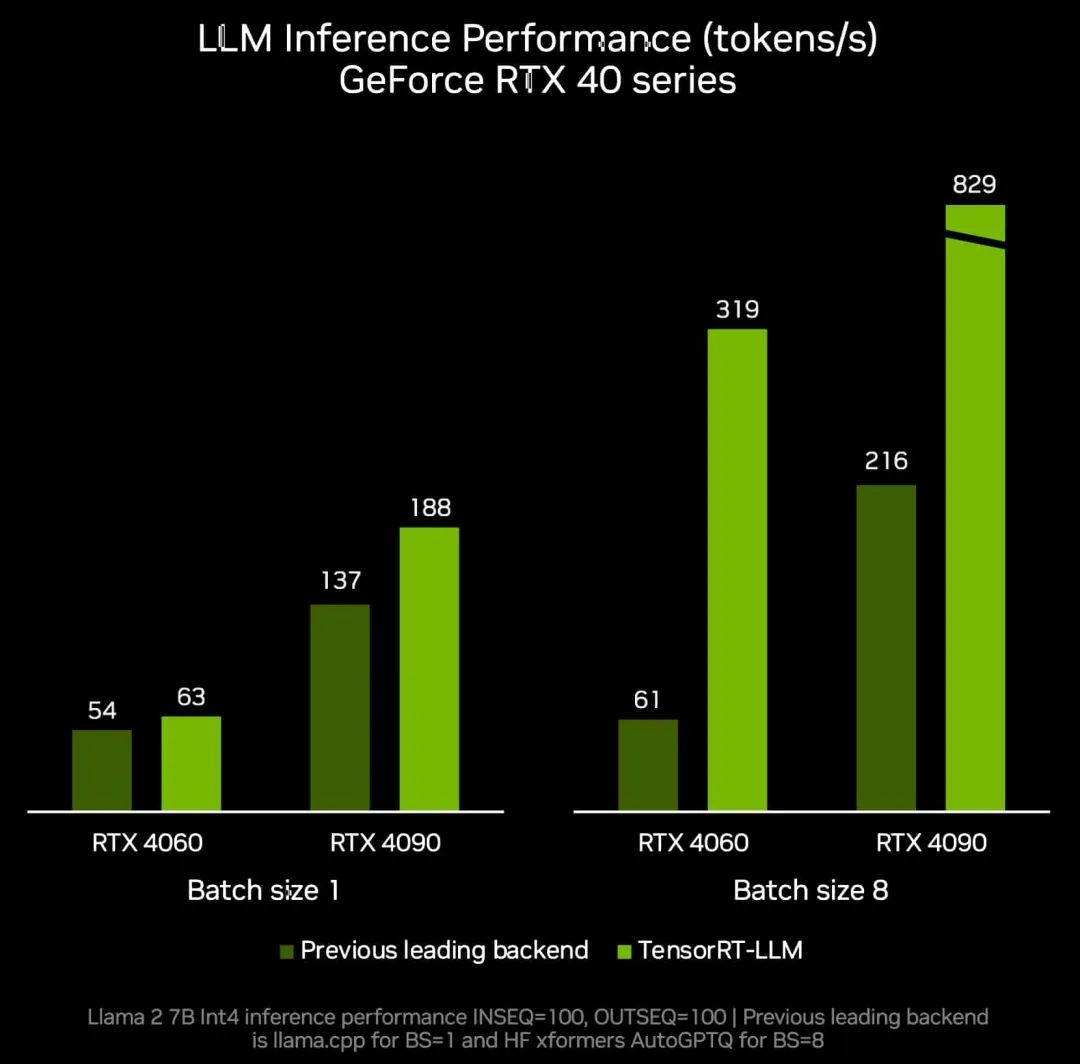

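In our actual use, vLLM is not slow in large-batch scenarios and can also fully saturate the GPU. Comparing TensorRT-LLM and vLLM, each is faster on some models and slower on others; both have their strengths and weaknesses.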

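TensorRT-LLM's defining trait is that it builds on TensorRT: the faster TensorRT evolves and the more powerful its features become, the more powerful TensorRT-LLM becomes. In terms of flexibility, I'd say vLLM and TensorRT-LLM are on par. LLM architectures are all fairly similar anyway, and TensorRT-LLM doesn't even use the ONNX parser, so when adding support for new models, building them quickly in Python is about equally efficient in both.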
